In this blog, we will delve into Kubernetes CRD (Custom Resource Definition) and Operator with hands-on examples.

For the hands-on part, we will use Kubebuilder, a framework that provides tools to help developers create, test, and deploy CRDs and Operators more easily.

Kubernetes CRD

There are several built-in resources in Kubernetes, such as pods, deployments, configmaps, services, etc., which are managed by the internal components of K8s.

In addition to these built-in resources, K8s lets users define their own resource types through CustomResourceDefinitions (CRDs). For instance, with a CRD, I can define resources like "mypod," "myjob," "myanything," and so on.

Note that the term "CRD" is used in two ways.

Sometimes it refers specifically to CustomResourceDefinition, a particular resource type in K8s; at other times it refers loosely to the custom resources that users create with it.

In the narrow sense, a CRD (CustomResourceDefinition) is a special built-in resource in K8s through which we can create other, custom resource types.

For example, the following CRD defines a custom resource called CronTab:

```yaml
apiVersion: apiextensions.k8s.io/v1
kind: CustomResourceDefinition
metadata:
  # Name must match <plural>.<group>
  name: crontabs.stable.example.com
spec:
  # Group name, used for REST API: /apis/<group>/<version>
  group: stable.example.com
  versions:
    - name: v1
      served: true
      storage: true
      schema:
        openAPIV3Schema:
          type: object
          properties:
            # Define attributes
            spec:
              type: object
              properties:
                cronSpec:
                  type: string
                image:
                  type: string
  # Scope can be Namespaced or Cluster
  scope: Namespaced
  names:
    # Plural name, used in URL: /apis/<group>/<version>/<plural>
    plural: crontabs
    # Singular name, can be used in CLI
    singular: crontab
    # CamelCase singular name, used in resource manifests
    kind: CronTab
    # Short name, can be used in CLI
    shortNames:
      - ct
```
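The comments in this manifest encode two naming rules: `metadata.name` must be `<plural>.<group>`, and the plural name appears in the REST path of the new API. The Go sketch below spells these rules out; `crdName` and `resourcePath` are hypothetical helpers for illustration, not part of any Kubernetes library.

```go
package main

import (
	"fmt"
	"strings"
)

// crdName returns the metadata.name a CRD must have: <plural>.<group>.
func crdName(plural, group string) string {
	return plural + "." + group
}

// resourcePath builds the REST path for a custom resource collection.
// With a namespace: /apis/<group>/<version>/namespaces/<namespace>/<plural>.
// Without one (cluster-scoped resources, or listing a namespaced resource
// across all namespaces): /apis/<group>/<version>/<plural>.
func resourcePath(group, version, namespace, plural string) string {
	parts := []string{"", "apis", group, version}
	if namespace != "" {
		parts = append(parts, "namespaces", namespace)
	}
	parts = append(parts, plural)
	return strings.Join(parts, "/")
}

func main() {
	fmt.Println(crdName("crontabs", "stable.example.com"))
	// crontabs.stable.example.com
	fmt.Println(resourcePath("stable.example.com", "v1", "default", "crontabs"))
	// /apis/stable.example.com/v1/namespaces/default/crontabs
	fmt.Println(resourcePath("stable.example.com", "v1", "", "crontabs"))
	// /apis/stable.example.com/v1/crontabs
}
```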

Once we apply this YAML file, our custom resource “CronTab” will be registered in K8s.

From this point on, we can create and manage CronTab objects like any other resource. For example, define one in a file named "my-crontab.yaml":

```yaml
apiVersion: "stable.example.com/v1"
kind: CronTab
metadata:
  name: my-new-cron-object
spec:
  cronSpec: "* * * * */5"
  image: my-awesome-cron-image
```

Run `kubectl apply -f my-crontab.yaml` to create the CronTab object, and `kubectl get crontab` to list CronTab objects (the short name defined in the CRD also works: `kubectl get ct`).
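When we apply an object like this, the API server validates it against the CRD's `openAPIV3Schema` before storing it. Fields the schema does not declare are not stored: depending on the client's field-validation setting, they are either rejected or pruned. Below is a toy Go sketch of that idea for the CronTab spec. It assumes a flat schema of string fields and stops at the first type error; `validateAndPrune` is a hypothetical function, not the API server's actual implementation.

```go
package main

import (
	"fmt"
	"sort"
)

// declaredStringFields lists the spec properties the CronTab CRD declares,
// all with type: string (a toy stand-in for the openAPIV3Schema).
var declaredStringFields = map[string]bool{"cronSpec": true, "image": true}

// validateAndPrune type-checks declared fields, returns a copy of the spec
// without undeclared fields, and reports which fields were undeclared.
func validateAndPrune(spec map[string]any) (map[string]any, []string, error) {
	kept := make(map[string]any)
	var unknown []string
	for key, val := range spec {
		if !declaredStringFields[key] {
			unknown = append(unknown, key)
			continue
		}
		if _, ok := val.(string); !ok {
			return nil, nil, fmt.Errorf("spec.%s: expected string, got %T", key, val)
		}
		kept[key] = val
	}
	sort.Strings(unknown) // map iteration order is random; sort for stable output
	return kept, unknown, nil
}

func main() {
	spec := map[string]any{
		"cronSpec": "* * * * */5",
		"image":    "my-awesome-cron-image",
		"replicas": 3, // not declared in the schema
	}
	kept, pruned, err := validateAndPrune(spec)
	fmt.Println(len(kept), pruned, err) // 2 [replicas] <nil>

	_, _, err = validateAndPrune(map[string]any{"image": 42})
	fmt.Println(err) // spec.image: expected string, got int
}
```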

The advantage of CRDs is that we do not need to handle data storage for custom resources, nor implement a separate HTTP server to expose an API for operating on them. Kubernetes takes care of both for us.

We interact with custom resources in the same way as with built-in resources.

A CRD alone is often not enough. For example, the CronTab object we created above with `kubectl apply -f my-crontab.yaml` has no execution content: it is just stored data, and no program runs on its schedule. In many scenarios, we want the custom resource to actually do something.

This is where an Operator comes in.

Operator

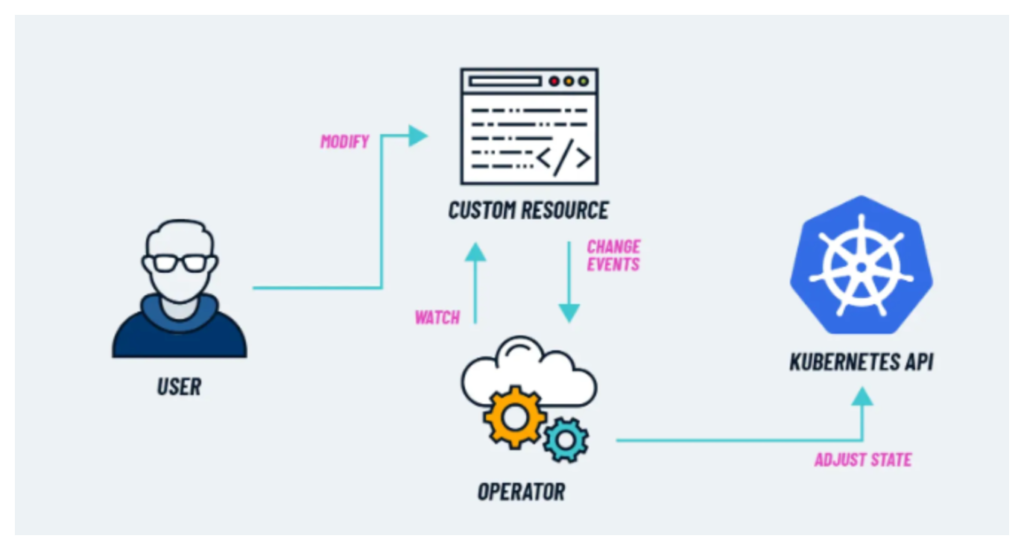

An Operator is essentially a controller for custom resources. It watches for changes to custom resources and performs specific operations in response.

For instance, when it detects that a custom resource has been created, an Operator can read the resource's properties, create a pod to run a specific program, and associate the pod with the custom resource object (for example, through an owner reference).
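The reconcile idea behind this can be sketched without any Kubernetes libraries. In the toy Go program below, `Cluster`, `CronTab`, and `Pod` are hypothetical in-memory stand-ins for API objects, and the `Owner` field stands in for an owner reference. `reconcile` compares the desired state (one pod per custom resource) with what exists and acts only on the difference, so calling it repeatedly is safe. A real Operator would also update the pod when the spec changes; that is omitted here.

```go
package main

import "fmt"

// Hypothetical in-memory stand-ins for cluster objects; a real Operator
// would go through the Kubernetes API instead.
type CronTab struct {
	Name  string
	Image string
}

type Pod struct {
	Name  string
	Image string
	Owner string // stands in for metadata.ownerReferences
}

type Cluster struct {
	CronTabs map[string]CronTab
	Pods     map[string]Pod
}

// reconcile drives the observed state (pods) toward the desired state
// (one pod per CronTab). It is idempotent: if nothing changed, it does nothing.
func reconcile(c *Cluster, name string) {
	podName := name + "-pod"
	ct, ok := c.CronTabs[name]
	if !ok {
		// The custom resource is gone. Kubernetes would garbage-collect the
		// owned pod via its owner reference; here we delete it ourselves.
		delete(c.Pods, podName)
		return
	}
	if _, exists := c.Pods[podName]; exists {
		return // already in the desired state
	}
	c.Pods[podName] = Pod{Name: podName, Image: ct.Image, Owner: ct.Name}
	fmt.Println("created", podName)
}

func main() {
	c := &Cluster{CronTabs: map[string]CronTab{}, Pods: map[string]Pod{}}
	c.CronTabs["my-new-cron-object"] = CronTab{Name: "my-new-cron-object", Image: "my-awesome-cron-image"}

	reconcile(c, "my-new-cron-object") // prints "created my-new-cron-object-pod"
	reconcile(c, "my-new-cron-object") // no-op: the pod already exists

	delete(c.CronTabs, "my-new-cron-object")
	reconcile(c, "my-new-cron-object") // removes the orphaned pod
	fmt.Println(len(c.Pods))           // 0
}
```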

In what form does an Operator exist? It runs much like an ordinary application in the cluster, typically as a Deployment or a StatefulSet.

In essence, what is commonly referred to as the Operator pattern is this model: CRD + custom controller.

Kubebuilder

When building a project, it helps to have a framework that provides tools for creating, testing, and deploying it efficiently. For CRDs and Operators, that framework is Kubebuilder.

Next, I will use Kubebuilder to create a small project. It defines a custom resource, "Foo," along with a controller that watches Foo objects and prints their contents whenever they change.

1. Installation

```shell
# Download kubebuilder and install it locally
curl -L -o kubebuilder "https://go.kubebuilder.io/dl/latest/$(go env GOOS)/$(go env GOARCH)"
chmod +x kubebuilder && sudo mv kubebuilder /usr/local/bin/
```

2. Create a test directory

```shell
mkdir kubebuilder-test
cd kubebuilder-test
```

3. Initialize the project

```shell
kubebuilder init --domain mytest.domain --repo mytest.domain/foo
```

4. Define CRD

Suppose we want our custom resource objects to look like this:

```yaml
apiVersion: "mygroup.mytest.domain/v1"
kind: Foo
metadata:
  name: xxx
spec:
  image: image
  msg: message
```

To support this, we create the CRD (which essentially means creating a new API):

```shell
kubebuilder create api --group mygroup --version v1 --kind Foo
```

When prompted, enter y to create both the resource and the controller. Kubebuilder then generates a number of directories and files for us, including:

- `api/v1/foo_types.go`: defines the CRD (that is, the API types).
- `internal/controller/foo_controller.go`: contains the controller, i.e. the business logic for the CRD.

The generated files are only a basic scaffold, so we need to adapt them to our own needs.
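The names that show up in the generated manifests follow from the flags passed so far: the API group is `--group` joined with the `--domain` given to `kubebuilder init`, and the CRD's name is `<plural>.<group>`. A small Go sketch of that composition; the helper names are hypothetical, and it assumes the naive plural "lowercase kind + s", whereas the real tooling uses a proper pluralization routine.

```go
package main

import (
	"fmt"
	"strings"
)

// apiGroup joins the --group and --domain flag values.
func apiGroup(group, domain string) string {
	return group + "." + domain
}

// apiVersion is the value used in the apiVersion field of Foo objects.
func apiVersion(group, domain, version string) string {
	return apiGroup(group, domain) + "/" + version
}

// crdName assumes the naive plural "lowercase kind + s" (an assumption;
// irregular plurals are handled differently by the real tools).
func crdName(group, domain, kind string) string {
	return strings.ToLower(kind) + "s." + apiGroup(group, domain)
}

func main() {
	fmt.Println(apiVersion("mygroup", "mytest.domain", "v1")) // mygroup.mytest.domain/v1
	fmt.Println(crdName("mygroup", "mytest.domain", "Foo"))   // foos.mygroup.mytest.domain
}
```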

a. Define the structure of the CRD in code

First, edit `api/v1/foo_types.go` to adjust the structure of the CRD:

```go
// FooSpec defines the desired state of Foo
type FooSpec struct {
	Image string `json:"image"`
	Msg   string `json:"msg"`
}

// FooStatus defines the observed state of Foo
type FooStatus struct {
	PodName string `json:"podName"`
}
```

b. Generate the CRD YAML automatically

Running `make manifests` generates the file `mygroup.mytest.domain_foos.yaml` in the `config/crd/bases` directory.

This file contains the YAML definition of our CRD:

```yaml
---
apiVersion: apiextensions.k8s.io/v1
kind: CustomResourceDefinition
metadata:
  annotations:
    controller-gen.kubebuilder.io/version: v0.13.0
  name: foos.mygroup.mytest.domain
spec:
  group: mygroup.mytest.domain
  names:
    kind: Foo
    listKind: FooList
    plural: foos
    singular: foo
  scope: Namespaced
  versions:
  - name: v1
    schema:
      openAPIV3Schema:
        description: Foo is the Schema for the foos API
        properties:
          apiVersion:
            description: 'APIVersion defines the versioned schema of this representation
              of an object. Servers should convert recognized schemas to the latest
              internal value, and may reject unrecognized values. More info: https://git.k8s.io/community/contributors/devel/sig-architecture/api-conventions.md#resources'
            type: string
          kind:
            description: 'Kind is a string value representing the REST resource this
              object represents. Servers may infer this from the endpoint the client
              submits requests to. Cannot be updated. In CamelCase. More info: https://git.k8s.io/community/contributors/devel/sig-architecture/api-conventions.md#types-kinds'
            type: string
          metadata:
            type: object
          spec:
            description: FooSpec defines the desired state of Foo
            properties:
              image:
                type: string
              msg:
                type: string
            required:
            - image
            - msg
            type: object
          status:
            description: FooStatus defines the observed state of Foo
            properties:
              podName:
                type: string
            required:
            - podName
            type: object
        type: object
    served: true
    storage: true
    subresources:
      status: {}
```

The `manifests` target that performs this step is defined in the Makefile:

```makefile
.PHONY: manifests
manifests: controller-gen ## Generate WebhookConfiguration, ClusterRole and CustomResourceDefinition objects.
	$(CONTROLLER_GEN) rbac:roleName=manager-role crd webhook paths="./..." output:crd:artifacts:config=config/crd/bases
```

In other words, Kubebuilder uses controller-gen to scan the Go code.

controller-gen reads the Go types together with specially formatted comments called markers (such as //+kubebuilder:…) and generates the CRD YAML file from them.

5. Implement the controller logic

Suppose we want to watch the Foo objects created by users and print out their properties.

a. Add business logic to the controller

Edit `internal/controller/foo_controller.go` to add our own business logic, as follows:

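```go
import (
	"context"

	ctrl "sigs.k8s.io/controller-runtime"
	"sigs.k8s.io/controller-runtime/pkg/client"
	"sigs.k8s.io/controller-runtime/pkg/log"

	mygroupv1 "mytest.domain/foo/api/v1"
)

// (The FooReconciler struct and RBAC markers generated by Kubebuilder are omitted here.)

func (r *FooReconciler) Reconcile(ctx context.Context, req ctrl.Request) (ctrl.Result, error) {
	l := log.FromContext(ctx)

	// Fetch the Foo object that triggered this reconcile request
	foo := &mygroupv1.Foo{}
	if err := r.Get(ctx, req.NamespacedName, foo); err != nil {
		l.Error(err, "unable to fetch Foo")
		// A NotFound error means the Foo was deleted; ignore it instead of retrying
		return ctrl.Result{}, client.IgnoreNotFound(err)
	}

	// Print the Foo's properties
	l.Info("Received Foo", "Image", foo.Spec.Image, "Msg", foo.Spec.Msg)

	return ctrl.Result{}, nil
}

// SetupWithManager sets up the controller with the Manager.
func (r *FooReconciler) SetupWithManager(mgr ctrl.Manager) error {
	return ctrl.NewControllerManagedBy(mgr).
		For(&mygroupv1.Foo{}). // watch Foo objects
		Complete(r)
}
```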

b. Test it

Note: Testing requires a local or remote Kubernetes cluster. By default, it uses the same cluster as your current kubectl context.

Run `make install` to register the CRD in the cluster. The Makefile shows that this target runs:

```makefile
.PHONY: install
install: manifests kustomize ## Install CRDs into the K8s cluster specified in ~/.kube/config.
	$(KUSTOMIZE) build config/crd | $(KUBECTL) apply -f -
```

Run `make run` to start the controller from your machine. The Makefile shows that this target runs:

```makefile
.PHONY: run
run: manifests generate fmt vet ## Run a controller from your host.
	go run ./cmd/main.go
```

You should then see output like the following:

```text
...
go fmt ./...
go vet ./...
go run ./cmd/main.go
2024-01-19T15:14:18+08:00 INFO setup starting manager
2024-01-19T15:14:18+08:00 INFO controller-runtime.metrics Starting metrics server
2024-01-19T15:14:18+08:00 INFO starting server {"kind": "health probe", "addr": "[::]:8081"}
2024-01-19T15:14:18+08:00 INFO controller-runtime.metrics Serving metrics server {"bindAddress": ":8080", "secure": false}
2024-01-19T15:14:18+08:00 INFO Starting EventSource {"controller": "foo", "controllerGroup": "mygroup.mytest.domain", "controllerKind": "Foo", "source": "kind source: *v1.Foo"}
2024-01-19T15:14:18+08:00 INFO Starting Controller {"controller": "foo", "controllerGroup": "mygroup.mytest.domain", "controllerKind": "Foo"}
2024-01-19T15:14:19+08:00 INFO Starting workers {"controller": "foo", "controllerGroup": "mygroup.mytest.domain", "controllerKind": "Foo", "worker count": 1}
```

Let's create a foo.yaml and try it:

```yaml
apiVersion: "mygroup.mytest.domain/v1"
kind: Foo
metadata:
  name: test-foo
spec:
  image: test-image
  msg: test-message
```

After running `kubectl apply -f foo.yaml`, you should see the Foo's properties printed in the controller's output:

```text
2024-01-19T15:16:00+08:00 INFO Received Foo {"controller": "foo", "controllerGroup": "mygroup.mytest.domain", "controllerKind": "Foo", "Foo": {"name":"test-foo","namespace":"aries"}, "namespace": "aries", "name": "test-foo", "reconcileID": "8dfd629e-3081-4d40-8fc6-bcc3e81bbb39", "Image": "test-image", "Msg": "test-message"}
```

This is a simple example of using Kubebuilder.

Conclusion

Kubernetes' CRD and Operator mechanisms give users powerful extensibility: CRDs let users define their own resources, and Operators manage those resources.

It is this extension mechanism that provides the Kubernetes ecosystem with great flexibility and adaptability, enabling it to be more widely used in various scenarios.

References:

https://kubernetes.io/docs/concepts/extend-kubernetes/api-extension/custom-resources/

https://kubernetes.io/docs/concepts/extend-kubernetes/operator/